AI Agent Deployment Strategies: From Development to Production at Scale

Learn proven deployment strategies for AI agents, from local testing to production at scale. Covers infrastructure, monitoring, rollback procedures, and real-world deployment patterns.

Deploying AI agents to production is fundamentally different from deploying traditional software. AI agents are autonomous, stateful, and interact with unpredictable environments—making deployment strategies critical to success.

Whether you're deploying a single customer service agent or coordinating a fleet of autonomous agents, this guide covers proven AI agent deployment strategies that minimize risk and maximize reliability.

What Are AI Agent Deployment Strategies?

AI agent deployment strategies are systematic approaches to moving AI agents from development to production environments. Unlike traditional software deployments, AI agents require:

- State management across deployments

- Continuous monitoring of agent behavior

- Gradual rollout mechanisms

- Rollback procedures for autonomous systems

- Environment-specific configurations for LLM endpoints, tools, and permissions

A robust deployment strategy ensures your AI agents work reliably in production while minimizing disruption to users.
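To make the environment-specific configuration item concrete, here is a minimal sketch of settings selected at startup. The `AgentConfig` fields, the `AGENT_ENV` variable, the endpoint URLs, and the tool names are all hypothetical placeholders, not a prescribed schema.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentConfig:
    """Settings that differ between environments (all values are illustrative)."""
    llm_endpoint: str
    allowed_tools: frozenset
    max_daily_cost_usd: float


# Hypothetical per-environment settings; real endpoints and limits will differ.
CONFIGS = {
    "development": AgentConfig(
        "http://localhost:8080/v1", frozenset({"search", "calculator"}), 5.0
    ),
    "staging": AgentConfig(
        "https://llm.staging.example.com/v1", frozenset({"search", "crm_read"}), 50.0
    ),
    "production": AgentConfig(
        "https://llm.example.com/v1", frozenset({"search", "crm_read", "crm_write"}), 500.0
    ),
}


def load_config(env=None):
    """Pick the config for the given or AGENT_ENV environment; fail loudly if unknown."""
    env = env or os.environ.get("AGENT_ENV", "development")
    if env not in CONFIGS:
        raise ValueError(f"Unknown environment: {env!r}")
    return CONFIGS[env]
```

Failing on an unknown environment name, rather than falling back to a default, avoids an agent silently running with the wrong endpoint or tool permissions.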

Why AI Agent Deployment Strategies Matter

Poor deployment practices are a common cause of AI agent failures in production. The main risks are:

- Autonomy risk: Agents can make unexpected decisions in new environments

- Cost exposure: Production API keys can rack up costs if not properly managed

- Data sensitivity: Agents may access different data in production vs. development

- Integration complexity: Real-world systems have dependencies that staging can't replicate

Teams that invest in deployment strategy tend to see fewer production incidents and faster iteration cycles.

Core AI Agent Deployment Patterns

1. Blue-Green Deployment for AI Agents

Maintain two identical production environments (blue and green):

- Deploy new agent version to the inactive environment

- Run validation tests against real data

- Switch traffic to new environment

- Keep old environment as instant rollback

Best for: Customer-facing agents where downtime is unacceptable.
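The blue-green steps above can be sketched in a few lines. This is a simplified, in-process illustration: `BlueGreenRouter` and its method names are hypothetical, and each "environment" is assumed to be a callable that takes a request and returns a response. In a real setup the switch usually happens at a load balancer or service mesh.

```python
class BlueGreenRouter:
    """Routes all traffic to one of two environments and can flip between them."""

    def __init__(self, blue, green):
        self.environments = {"blue": blue, "green": green}
        self.active = "blue"

    @property
    def inactive(self):
        return "green" if self.active == "blue" else "blue"

    def handle(self, request):
        """Send the request to whichever environment is currently live."""
        return self.environments[self.active](request)

    def promote(self, validation_requests, is_valid):
        """Validate the inactive environment, then switch traffic to it.

        Returns True if traffic was switched, False if validation failed.
        """
        candidate = self.environments[self.inactive]
        if not all(is_valid(candidate(r)) for r in validation_requests):
            return False
        self.active = self.inactive
        return True

    def rollback(self):
        """Switch back to the previous environment, which is still running."""
        self.active = self.inactive
```

Because the old environment is never torn down during `promote`, `rollback` is a single assignment rather than a redeploy.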

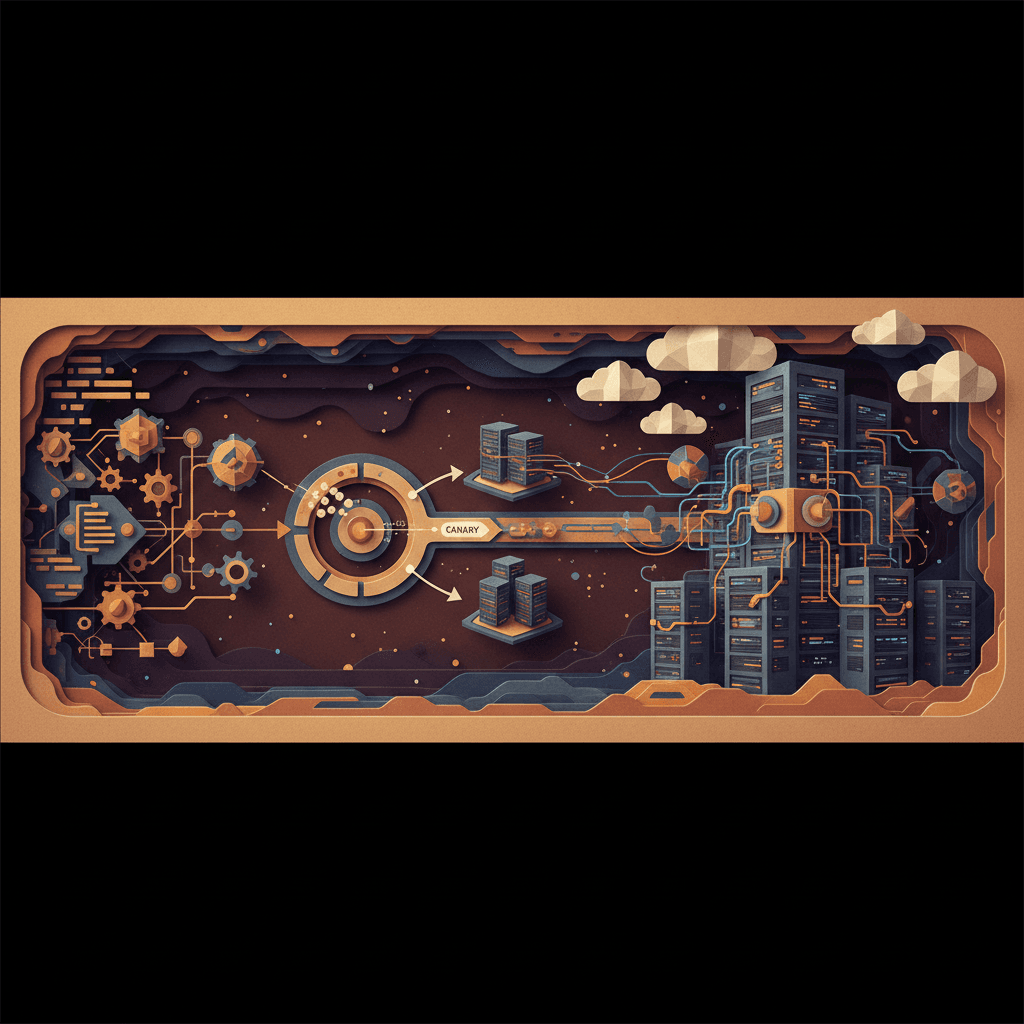

2. Canary Deployment

Gradually roll out new agent versions:

- Deploy to 5% of traffic

- Monitor error rates, latency, and user satisfaction

- Increase to 25%, 50%, 100% over hours or days

- Automatic rollback if metrics degrade

Best for: Autonomous agents where you want to validate behavior on real workloads.
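One way to implement the traffic split and the rollback check is sketched below. The function names and the `tolerance` default are assumptions for illustration. Hashing the user ID keeps each user on the same agent version as the percentage grows.

```python
import hashlib


def in_canary(user_id, percent):
    """Deterministically assign a user to the canary based on a hash bucket.

    The same user always lands in the same bucket, so raising `percent`
    only adds users to the canary and never removes them.
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # bucket in 0..99
    return bucket < percent


def should_rollback(canary_error_rate, baseline_error_rate, tolerance=0.02):
    """Roll back when the canary's error rate exceeds baseline by more than `tolerance`."""
    return canary_error_rate > baseline_error_rate + tolerance
```

A rollout controller could call `should_rollback` at each stage (5%, 25%, 50%) and only raise `percent` when it returns `False`.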

For more on monitoring agent behavior, see our guide on AI agent observability.

3. Shadow Deployment

Run new agent version alongside production without affecting users:

- Mirror production traffic to new agent

- Compare outputs and decisions

- Identify discrepancies before full deployment

- No user-facing impact, provided the shadow agent's tool calls and other side effects are stubbed or sandboxed

Best for: High-stakes agents (financial, medical, legal) where errors are costly.
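A minimal sketch of the mirroring and comparison steps follows. `run_with_shadow` is a hypothetical helper, and both agents are assumed to be callables from request to response. It runs the shadow agent inline for simplicity; a real deployment would more likely mirror traffic asynchronously and compare outputs with a fuzzier check than strict equality.

```python
def run_with_shadow(request, production_agent, shadow_agent, discrepancies):
    """Serve the production answer; record any difference from the shadow agent.

    The shadow agent's output is never returned to the user, and a shadow
    failure must not affect the production response.
    """
    response = production_agent(request)
    try:
        shadow_response = shadow_agent(request)
    except Exception as exc:  # shadow errors are recorded, not raised
        discrepancies.append(
            {"request": request, "production": response, "shadow_error": repr(exc)}
        )
        return response
    if shadow_response != response:
        discrepancies.append(
            {"request": request, "production": response, "shadow": shadow_response}
        )
    return response
```

The `discrepancies` list is what gets reviewed before promoting the new version.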

4. Feature Flag Deployment

Control agent features via configuration:

- Deploy code with features disabled

- Enable features gradually per user segment

- A/B test different agent behaviors

- Instant disable if issues arise

Best for: Multi-tenant systems or agents with experimental features.
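The flag logic itself can be very small. The sketch below uses a hypothetical in-memory `FeatureFlags` class; production systems typically back this with a config service or database so flags can change without a redeploy.

```python
class FeatureFlags:
    """In-memory flag store: each flag maps to the set of segments it is enabled for."""

    def __init__(self):
        self._enabled_segments = {}

    def enable(self, flag, segment):
        """Turn the flag on for one user segment."""
        self._enabled_segments.setdefault(flag, set()).add(segment)

    def disable(self, flag):
        """Kill switch: turn the flag off for every segment at once."""
        self._enabled_segments.pop(flag, None)

    def is_enabled(self, flag, segment):
        # Unknown flags default to off, so new code ships disabled.
        return segment in self._enabled_segments.get(flag, set())
```

An agent would then guard an experimental behavior with something like `if flags.is_enabled("auto_refunds", user.segment):`, where the flag and segment names are placeholders.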

AI Agent Deployment Checklist

Before deploying any AI agent to production:

Pre-Deployment

- Test agent in staging with production-like data

- Verify API rate limits and cost controls

- Confirm tool permissions are production-appropriate

- Review agent decision logs for unexpected behavior

- Set up monitoring alerts (error rates, latency, cost)

- Document rollback procedure

- Test rollback procedure

During Deployment

- Deploy during low-traffic window

- Monitor agent decisions in real-time

- Track error rates vs. baseline

- Verify integrations (databases, APIs, tools)

- Check cost metrics (LLM API usage)

Post-Deployment

- Monitor for 24-48 hours

- Review agent decision quality

- Analyze user feedback

- Document any unexpected behaviors

- Update runbooks with learnings

For cost monitoring strategies, check out our AI agent cost optimization guide.

Infrastructure Considerations for AI Agent Deployment

Container Orchestration

Containers (Docker) orchestrated with Kubernetes work well for AI agents:

- Isolate agent environments

- Scale agents horizontally

- Manage secrets (API keys) securely

- Roll out updates gradually with health checks

Serverless Deployment

AWS Lambda and Google Cloud Functions suit event-driven agents:

- Cost-effective for intermittent workloads

- Auto-scaling without infrastructure management

- Cold starts can impact latency

Edge Deployment

Deploy lightweight agents close to users:

- Reduced latency for conversational agents

- Data privacy (process locally)

- Works offline or in low-connectivity environments

Common AI Agent Deployment Mistakes to Avoid

1. Skipping Staging Environments

Mistake: Deploy directly from development to production.

Risk: Agents behave unexpectedly with real data, real APIs, and real user interactions.

Solution: Always test in staging with production-like conditions.

2. Ignoring State Management

Mistake: Treat agents as stateless services.

Risk: Lose conversation history, user context, or in-progress tasks during deployment.

Solution: Persist agent state to databases; gracefully migrate state during updates.
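As a sketch of what persisting and migrating state can look like, the example below stores per-session state as JSON in SQLite with a schema version. The table layout, the `StateStore` class, and the `pending_tasks` field are assumptions chosen for illustration.

```python
import json
import sqlite3


class StateStore:
    """Persists per-session agent state as JSON in SQLite."""

    def __init__(self, path=":memory:"):
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS agent_state ("
            "session_id TEXT PRIMARY KEY, schema_version INTEGER, state TEXT)"
        )

    def save(self, session_id, state, schema_version=1):
        with self.conn:  # commits on success, rolls back on error
            self.conn.execute(
                "INSERT OR REPLACE INTO agent_state VALUES (?, ?, ?)",
                (session_id, schema_version, json.dumps(state)),
            )

    def load(self, session_id):
        row = self.conn.execute(
            "SELECT schema_version, state FROM agent_state WHERE session_id = ?",
            (session_id,),
        ).fetchone()
        if row is None:
            return None
        version, state = row[0], json.loads(row[1])
        return migrate(state, version)


def migrate(state, version):
    """Upgrade state written by an older agent version (hypothetical v1 -> v2 change)."""
    if version < 2:
        # Assumed example: v2 agents expect a `pending_tasks` list.
        state.setdefault("pending_tasks", [])
    return state
```

Storing a version alongside each record lets a newly deployed agent read state written by the previous release instead of dropping in-progress sessions.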

3. No Cost Guardrails

Mistake: Deploy without API usage limits.

Risk: Runaway agent loops can generate thousands of LLM calls.

Solution: Implement per-agent, per-user, and per-time-period rate limits.
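A simple fixed-window limiter illustrates the idea. `CallBudget` is a hypothetical name, and the key can be any hashable value, such as an agent ID or a user ID, so the same class covers per-agent and per-user limits. The injectable `clock` parameter is there only to make the behavior easy to test.

```python
import time


class CallBudget:
    """Caps the number of LLM calls per key within a fixed time window."""

    def __init__(self, max_calls, window_seconds, clock=time.monotonic):
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self.clock = clock
        self._windows = {}  # key -> (window_start, calls_in_window)

    def allow(self, key):
        """Return True and count the call if `key` is under its limit."""
        now = self.clock()
        start, count = self._windows.get(key, (now, 0))
        if now - start >= self.window_seconds:
            start, count = now, 0  # window expired; start a new one
        if count >= self.max_calls:
            self._windows[key] = (start, count)
            return False
        self._windows[key] = (start, count + 1)
        return True
```

The agent loop would check `budget.allow(agent_id)` before each LLM call and stop or escalate to a human when it returns `False`, which bounds the damage from a runaway loop.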

4. Poor Observability

Mistake: Deploy without logging agent decisions and tool calls.

Risk: Can't debug issues or understand why agents failed.

Solution: Log every agent decision, tool call, and outcome.
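One lightweight way to do this is to emit a JSON line per event through the standard `logging` module. The logger name and the record fields below are assumptions; adapt them to whatever your log pipeline expects.

```python
import json
import logging
import time

logger = logging.getLogger("agent.audit")


def log_agent_event(agent_id, event_type, payload, outcome):
    """Emit one JSON line per decision or tool call and return the record."""
    record = {
        "timestamp": time.time(),
        "agent_id": agent_id,
        "event_type": event_type,  # e.g. "decision" or "tool_call"
        "payload": payload,
        "outcome": outcome,
    }
    # default=str keeps logging from failing on non-JSON-serializable values.
    logger.info(json.dumps(record, default=str))
    return record
```

Structured records like these can be filtered by `agent_id` or `event_type` when reconstructing why an agent took a particular action.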

5. No Rollback Plan

Mistake: Assume deployments will work perfectly.

Risk: When (not if) issues arise, there is no way to revert quickly.

Solution: Test rollback before deployment; automate rollback triggers.

AI Agent Deployment Security

Production AI agents need strict security controls:

- API key separation and rotation: Use separate keys for dev, staging, and production, and rotate them regularly

- Least-privilege tools: Agents should only access necessary APIs

- Audit logging: Track every agent action for compliance

- Secrets management: Never hardcode credentials

- Network isolation: Limit agent network access
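Least-privilege tool access and audit logging can be enforced at a single choke point. The sketch below is one possible shape: `ToolGateway` and `ToolNotPermitted` are hypothetical names, and tools are assumed to be plain callables registered by name.

```python
class ToolNotPermitted(Exception):
    """Raised when an agent tries to call a tool outside its allowlist."""


class ToolGateway:
    """Executes tools for an agent, enforcing an allowlist and keeping an audit trail."""

    def __init__(self, tools, allowed):
        self.tools = tools                 # name -> callable
        self.allowed = frozenset(allowed)  # tool names this agent may use
        self.audit_log = []                # (tool_name, status) tuples

    def call(self, name, *args, **kwargs):
        if name not in self.allowed:
            self.audit_log.append((name, "denied"))
            raise ToolNotPermitted(name)
        result = self.tools[name](*args, **kwargs)
        self.audit_log.append((name, "ok"))
        return result
```

Routing every tool call through one gateway means denied attempts are recorded too, which is often the most useful signal in a security review.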

Learn more in our AI agent security guide.

Monitoring AI Agents Post-Deployment

Key metrics to track:

- Success rate: % of tasks completed successfully

- Latency: Time from user request to agent response

- Cost per interaction: LLM API costs per user session

- Error rate: Failed tool calls, timeouts, exceptions

- User satisfaction: Feedback scores, retry rates

Set up alerts for:

- Error rate > 5%

- Latency > 10 seconds (95th percentile)

- Cost spike > 2x baseline

- Success rate drop > 10%
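The alert thresholds above translate directly into a small check. The metric key names are assumptions, rates are expressed as fractions, and the success-rate rule is read here as a drop of more than 10 percentage points below baseline, which is one possible interpretation.

```python
def check_alerts(current, baseline):
    """Return the names of the alerts that fire for the current metrics.

    Rates are fractions (0.05 == 5%). The success-rate rule is interpreted as
    a drop of more than 10 percentage points below baseline (an assumption).
    """
    alerts = []
    if current["error_rate"] > 0.05:
        alerts.append("error_rate")
    if current["p95_latency_s"] > 10:
        alerts.append("latency")
    if current["cost"] > 2 * baseline["cost"]:
        alerts.append("cost_spike")
    if baseline["success_rate"] - current["success_rate"] > 0.10:
        alerts.append("success_rate_drop")
    return alerts
```

The same function can feed an automated rollback trigger: if it returns a non-empty list during a rollout, revert to the previous version.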

Conclusion

AI agent deployment strategies are critical to production success. Unlike traditional software, agents are autonomous and can make unexpected decisions—making gradual rollouts, monitoring, and rollback procedures essential.

By following deployment patterns like canary releases, blue-green deployments, and feature flags, you can confidently deploy AI agents while minimizing risk.

About AI Agents Plus Editorial

AI automation expert and thought leader in business transformation through artificial intelligence.