Anthropic vs. Pentagon: The First Real Test of AI Industry Autonomy

Anthropic refused the Pentagon's 'any lawful use' demand and got designated a supply chain risk. OpenAI took a different path. This standoff isn't just about one company—it's a template for how AI labs will negotiate with governments.

The Pentagon just designated Anthropic as a "supply chain risk" after the AI lab refused to accept broad military deployment terms. Meanwhile, OpenAI cut a deal allowing the Department of Defense to deploy its models on classified networks. This isn't just corporate drama—it's the opening move in a much bigger game about who controls the future of AI.

What Actually Happened

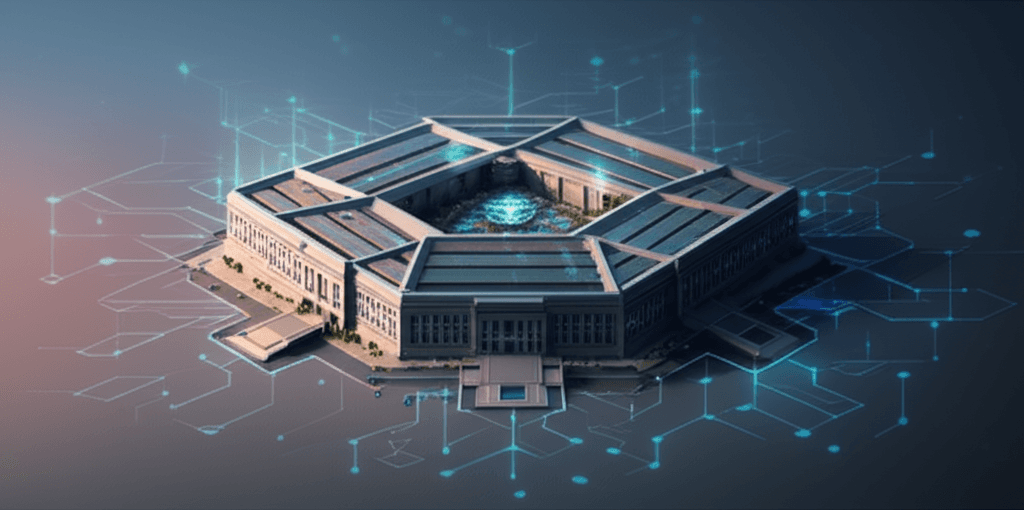

The Department of Defense demanded that Anthropic agree to allow "any lawful use" of its AI models, including deployment in military systems. Anthropic said no. The Pentagon responded by designating the company a supply chain risk and threatening to invoke the Defense Production Act, a step critics read as a move toward partial nationalization.

Former Trump advisor Dean Ball called it "attempted corporate murder" on X, warning that this move could have a chilling effect across the entire AI industry. Alan Rozenshtein, a former DOJ official specializing in technology law, told Politico this could be the first step toward partial nationalization of AI companies.

OpenAI took a different route. CEO Sam Altman announced a new agreement with the Pentagon that allows military deployment on classified networks but includes explicit prohibitions on domestic mass surveillance and requirements for "human responsibility for the use of force, including for autonomous weapon systems." Altman publicly called for the DoD to offer these same terms to all AI companies.

Why Anthropic Drew a Red Line

Anthropic has positioned itself as the "safety-first" AI lab since its founding by former OpenAI researchers who left over disagreements about OpenAI's direction. Accepting unlimited military deployment terms would contradict everything Anthropic has publicly stood for.

But there's more to it than brand consistency. Anthropic recently made waves by describing Claude as "a new kind of entity" that might possess some form of consciousness. Whether you buy that claim or not, it signals that the company is thinking seriously about the moral status of AI systems—and by extension, the moral responsibility of deploying them.

Accepting "any lawful use" terms means you're comfortable with whatever falls within the ever-shifting boundaries of military law. For a company wrestling with questions about AI consciousness and moral status, that's not a trivial ask.

OpenAI's Middle Path

OpenAI's deal is more interesting than it looks at first glance. By negotiating specific constraints (no domestic mass surveillance, human responsibility for the use of force), Altman managed to thread a needle: work with the military without writing a blank check.

That's a playbook. And Altman's public statement that these same terms should be offered to all AI companies is strategic positioning. He's saying: if you want AI companies to work with you, here's what reasonable terms look like.

Even Ilya Sutskever, who left OpenAI to start Safe Superintelligence after the Sam Altman ouster drama, posted his approval: "It's extremely good that Anthropic has not backed down, and it's significant that OpenAI has taken a similar stance. In the future, there will be much more challenging situations of this nature."

When the co-founder of a rival lab publicly backs both companies, you know the stakes are high.

What This Means For Your Business

If you're building with AI or planning to, this standoff matters more than you might think. Here's why:

- If you're building AI products: The rules of the game are being written right now. What AI companies can and can't refuse will shape what capabilities are available to commercial users. A heavily regulated, government-directed AI ecosystem means less innovation reaching the market.

- If you're buying AI solutions: Provider lock-in just got more complicated. If the Pentagon can threaten to nationalize AI companies that don't comply with deployment demands, your SaaS vendor's roadmap might suddenly become a matter of national security policy, not product-market fit.

- If you're evaluating AI strategy: The window for choosing AI providers based purely on technical capabilities is closing. Organizational values, governance structures, and willingness to push back on government overreach are now strategic considerations. Do you want to build on infrastructure from a company that'll fold when pressured, or one that'll draw lines?

The Bigger Picture

This is the first time we're seeing what happens when cutting-edge AI development collides with national security priorities. It won't be the last.

The Defense Production Act was designed for wartime manufacturing—steel, aircraft, medical supplies. Using it to compel AI model deployment is a category shift. If it works, every government with tech companies in its jurisdiction has a new lever.

China's already there: its AI companies operate under the assumption that the state has final say. The EU is moving toward heavy regulation through the AI Act. The question isn't whether governments will assert control over AI development. The question is what that control looks like and whether AI companies have any negotiating power left.

Anthropic and OpenAI just showed two different ways to answer that question. One company refused and absorbed the threat. The other negotiated boundaries and made them public. Both approaches matter.

What to Watch Next

Does the Pentagon actually invoke the Defense Production Act, or was the threat enough? Does Anthropic back down or double down? Do other AI labs publicly align with either approach?

More importantly: does this standoff establish a template? If OpenAI's negotiated constraints become the industry standard, that's a meaningful limit on military AI deployment. If Anthropic's refusal triggers actual nationalization, the rules change for everyone.

The technology you're building with today might be governed by rules negotiated in boardrooms and announced in press releases this week. Pay attention.

Build AI Systems You Can Actually Control

At AI Agents Plus, we help businesses deploy AI that works for them, not the other way around. We offer:

- Custom AI Agents — Autonomous systems that handle real workflows while staying within your governance requirements

- Rapid Prototyping — Move from concept to working system in days, not quarters

- Voice AI Solutions — Conversational interfaces that actually solve user problems

We build AI systems that deliver ROI without betting your company on someone else's policy decisions.

About AI Agents Plus Editorial

AI automation expert and thought leader in business transformation through artificial intelligence.