Google's Gemini 3.1 Pro: The Race for Advanced Reasoning Heats Up

Google launches Gemini 3.1 Pro with improved core reasoning capabilities. As foundation models become commoditized, reasoning is the new battleground—and Google is betting big on it.

Google just raised the stakes in the AI reasoning race.

The company launched Gemini 3.1 Pro this week with what it calls a "step forward in core reasoning," rolling out across the Gemini app and NotebookLM. While incremental model updates are standard fare in AI, this release signals something bigger: the shift from "bigger models" to "smarter models" as the primary axis of competition.

What's New in Gemini 3.1 Pro

According to Google's announcement, Gemini 3.1 Pro is designed for tasks where simple answers aren't enough:

"3.1 Pro is designed for tasks where a simple answer isn't enough, taking advanced reasoning and making it useful for your hardest challenges. This improved intelligence can help in practical applications — whether you're looking for a clear, visual explanation of a complex topic, a way to synthesize data into a single view, or bringing a creative project to life."

Key capabilities:

- Advanced reasoning — Better at multi-step logical inference

- Visual explanations — Can break down complex concepts with visual aids

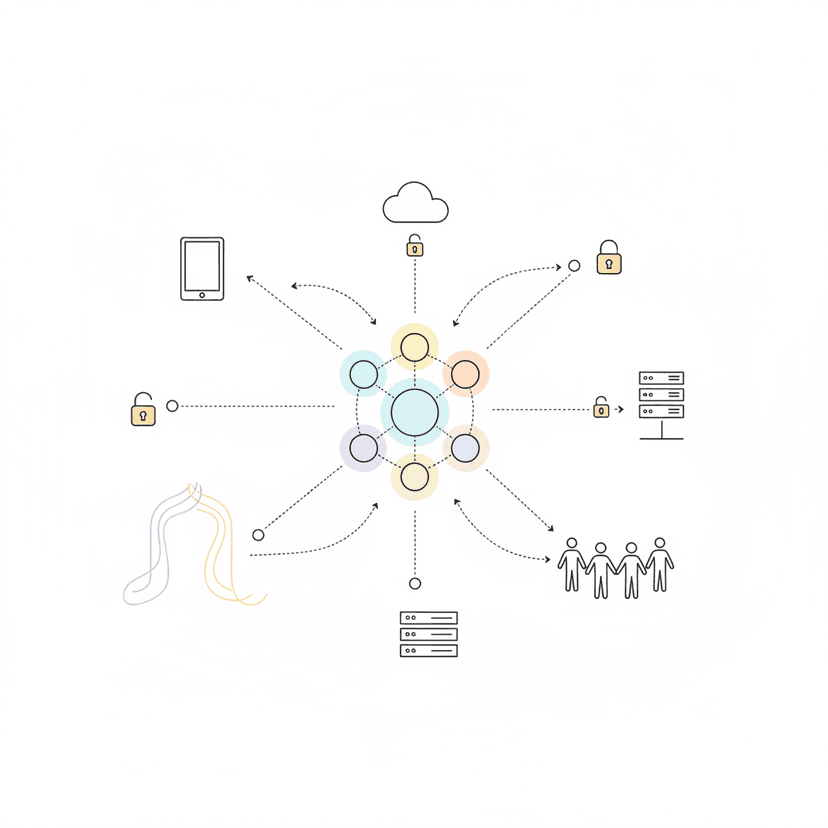

- Data synthesis — Combining information from multiple sources into coherent analysis

- Creative applications — Helping with complex creative projects requiring planning and reasoning

The model is rolling out starting today in the Gemini app and NotebookLM, which puts it within reach of millions of users as the rollout completes.

Why Reasoning Is The New Battleground

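For the last two years, the AI arms race was about scale: more parameters, more training data, more compute. GPT-4 was widely reported to have about 1.7 trillion parameters, although OpenAI has never confirmed a figure, and rivals such as Claude 3 Opus competed in the same frontier tier. Everyone was building bigger.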

That era is ending. Not because scale doesn't matter — it does — but because:

- Scale has diminishing returns: The jump from GPT-3 to GPT-4 was dramatic. GPT-4 to GPT-4.5? Less so. Going from 100B to 1T parameters doesn't automatically make a model 10x better.

- Training costs are prohibitive: Frontier models reportedly already cost $100M+ to train. Under compute-optimal scaling, doubling the parameter count also means training on roughly twice as much data. Compute, and with it cost, therefore roughly quadruples, but usefulness doesn't double.

- Inference costs matter: Bigger models are more expensive to run, because compute per generated token grows roughly in proportion to parameter count. For many production applications, a 10% improvement in quality isn't worth a 2x increase in API costs.

- Reasoning capability is what users actually need: Most real-world AI applications require multi-step planning, synthesis of complex information, or logical inference. Raw knowledge matters less than the ability to think through problems.
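The cost points above can be given rough numbers. This back-of-the-envelope sketch uses two widely cited approximations: training takes about 6 × N × D FLOPs (N parameters, D training tokens), and inference takes about 2 × N FLOPs per generated token. The model sizes are hypothetical. The "about 20 tokens per parameter" rule is an assumed rough reading of compute-optimal ("Chinchilla") scaling, not a figure for any real model.

```python
"""Back-of-the-envelope compute estimates (illustrative assumptions only)."""


def training_flops(params: float, tokens: float) -> float:
    # Common approximation: ~6 FLOPs per parameter per training token
    # (forward + backward pass).
    return 6.0 * params * tokens


def inference_flops_per_token(params: float) -> float:
    # Common approximation: ~2 FLOPs per parameter per generated token
    # (forward pass only).
    return 2.0 * params


# Assumed compute-optimal heuristic: training tokens scale with parameters.
TOKENS_PER_PARAM = 20

small = 100e9  # hypothetical 100B-parameter model
large = 200e9  # hypothetical model with 2x the parameters

train_small = training_flops(small, TOKENS_PER_PARAM * small)
train_large = training_flops(large, TOKENS_PER_PARAM * large)

train_ratio = train_large / train_small
infer_ratio = inference_flops_per_token(large) / inference_flops_per_token(small)

print(f"Training compute ratio (2x params): {train_ratio:.1f}x")   # 4.0x
print(f"Inference compute ratio per token:  {infer_ratio:.1f}x")   # 2.0x
```

These are compute ratios, not dollar figures. Actual cost also depends on hardware, utilization and pricing, but the shape of the trade-off is the same: doubling parameters roughly quadruples training compute and doubles per-token serving compute.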

Google's focus on reasoning over raw scale is smart positioning. If you can deliver better problem-solving capability without proportionally scaling parameters, you win on both performance and economics.

The Technical Angle

What does "improved core reasoning" actually mean?

In technical terms, reasoning capability breaks down into several dimensions:

Logical inference — Can the model follow multi-step reasoning chains without losing track? Example: "If A implies B, and B implies C, what can we conclude about A and C?"

Abstraction — Can it identify patterns across different contexts and apply principles from one domain to another?

Synthesis — Can it combine information from multiple sources into coherent, non-contradictory analysis?

Planning — Can it break complex goals into achievable steps and adapt when plans fail?
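The logical-inference example above is a classic transitive chain. A tiny rule engine makes its multi-step structure explicit: each pass applies one implication, and the conclusion about A and C only appears after two steps. This is a generic forward-chaining sketch for illustration. It is not a description of how Gemini or any LLM reasons internally.

```python
"""Toy forward chaining over implication rules (illustrative only)."""


def forward_chain(facts: set[str], rules: list[tuple[str, str]]) -> set[str]:
    """Derive every fact reachable by repeatedly applying 'premise -> conclusion' rules."""
    derived = set(facts)
    changed = True
    while changed:
        changed = False
        for premise, conclusion in rules:
            # Modus ponens: if the premise holds, the conclusion holds too.
            if premise in derived and conclusion not in derived:
                derived.add(conclusion)
                changed = True
    return derived


rules = [("A", "B"), ("B", "C")]  # A implies B, B implies C

print(sorted(forward_chain({"A"}, rules)))  # ['A', 'B', 'C']: A implies C
print(sorted(forward_chain({"C"}, rules)))  # ['C']: C does not imply A
```

The second call shows the other half of the answer: the chain licenses "A implies C" but not the reverse. Keeping track of that direction across many steps is the kind of bookkeeping a reasoning model must get right.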

Gemini 3.1 Pro's improvements likely come from:

- Better training data curation — Including more examples of complex reasoning rather than just factual knowledge

- Reinforcement learning — Training the model to value correct reasoning processes, not just correct answers

- Architecture improvements — Optimizing how the model maintains context across long reasoning chains
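Two toy scoring functions show the difference between rewarding answers and rewarding reasoning. Google has not said how it trains Gemini 3.1 Pro. This sketch only illustrates the general idea of outcome-based versus process-based rewards, and the per-step correctness labels are assumed to come from some external grader.

```python
"""Toy contrast between outcome-based and process-based rewards (illustrative)."""


def outcome_reward(final_answer_correct: bool) -> float:
    # Outcome supervision: only the final answer is scored.
    return 1.0 if final_answer_correct else 0.0


def process_reward(step_correct: list[bool]) -> float:
    # Process supervision: every intermediate step is scored; here the reward
    # is simply the fraction of steps an (assumed) grader judged correct.
    if not step_correct:
        return 0.0
    return sum(step_correct) / len(step_correct)


# A hypothetical solution that reaches the right answer despite a flawed middle step.
steps = [True, False, True]

print(outcome_reward(final_answer_correct=True))  # 1.0
print(round(process_reward(steps), 2))            # 0.67
```

Under the outcome-only score, the lucky solution looks perfect. The process score penalizes the broken step, which is the behavior "value correct reasoning processes" refers to.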

Google hasn't released technical details, but its emphasis on "visual explanations" and "data synthesis" suggests it is optimizing for coherence and structure, not just raw performance on benchmarks.

What This Means For Your Business

If you're using or evaluating AI for business applications, the shift to reasoning-focused models matters:

- If you're building AI products: Test your use cases on Gemini 3.1 Pro, especially if they involve multi-step workflows, data analysis, or complex problem-solving. Don't just look at output quality; also measure consistency across similar tasks. If the reasoning gains hold up, you should see more stable performance.

- If you're buying AI solutions: Ask vendors which models they're using and why. "We use GPT-4" isn't a strategy. The question is: which model is best for your specific use case? For analysis-heavy applications, reasoning-optimized models like Gemini 3.1 Pro may outperform general-purpose frontier models.

- If you're evaluating AI strategy: Don't assume "bigger is better." For many business applications, such as financial analysis, strategic planning, and research synthesis, a smaller, reasoning-optimized model can outperform a larger general model while costing less to run. Evaluate models on task-specific performance, not marketing claims.
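You can act on the advice to measure consistency and task-specific performance by running each task several times and scoring the results yourself. The helpers below are a minimal sketch. The answers, run count and cost figures are hypothetical placeholders. Exact-match agreement after light normalization is an assumption that suits short, well-defined answers better than free-form text.

```python
"""Minimal evaluation helpers for comparing models on your own tasks (sketch)."""
from collections import Counter


def consistency(answers: list[str]) -> float:
    """Fraction of repeated runs that agree with the most common answer."""
    if not answers:
        return 0.0
    # Light normalization so trivial whitespace/case differences still match.
    normalized = [a.strip().lower() for a in answers]
    _, top_count = Counter(normalized).most_common(1)[0]
    return top_count / len(normalized)


def cost_per_correct(correct: int, total_cost_usd: float) -> float:
    """Total spend divided by the number of correct outputs; lower is better."""
    return float("inf") if correct == 0 else total_cost_usd / correct


# Hypothetical results from running the same task five times on one model.
runs = ["42", "42", " 42", "41", "42"]

print(consistency(runs))                                 # 0.8
print(cost_per_correct(correct=4, total_cost_usd=0.50))  # 0.125
```

Comparing models on both numbers makes the trade-off visible. A model that is slightly less accurate but far cheaper per correct answer may be the better fit for a given workflow.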

The AI market is maturing. In 2023, you could win by having any LLM integration. In 2026, you win by choosing the right model for each task and optimizing your workflows around model strengths.

The Competitive Picture

Google isn't alone in pushing reasoning capabilities:

- OpenAI's o1 models are explicitly designed for complex reasoning, showing significant improvements on math, coding, and logical inference tasks

- Anthropic's Claude models, led by the Opus line, emphasize structured thinking and analysis

- Meta's open-weight Llama models give teams a way to bring capable models to self-hosted, open-source deployments

But Google has distribution advantages. Gemini 3.1 Pro launching in the Gemini app means it reaches:

- Millions of Google Workspace users

- NotebookLM users (which has become surprisingly popular for research synthesis)

- Android users, who can reach the Gemini app directly from their phones

- Enterprise customers already in the Google Cloud ecosystem

If Gemini 3.1 Pro delivers on its reasoning promises, that distribution could translate to significant market share in business AI applications.

Looking Ahead

The race for better reasoning is just beginning. Expect:

- Specialized reasoning models — Different models optimized for different reasoning types (mathematical, spatial, causal, etc.)

- Hybrid systems — Using lightweight models for simple tasks and reasoning-heavy models only when needed

- Reasoning benchmarks — Better ways to measure and compare reasoning capability beyond generic leaderboards

- Explainable reasoning — Models that show their reasoning process, not just final answers
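The hybrid-systems idea can be sketched as a router that sends routine requests to a lightweight model and escalates the rest. The model names, keyword list and word-count threshold are hypothetical placeholders. Substring keyword matching is deliberately crude; a production router might use a trained classifier instead.

```python
"""Toy router for a hybrid setup: cheap model by default, reasoning model when needed."""
from dataclasses import dataclass

# Hypothetical model identifiers; substitute the models you actually use.
LIGHT_MODEL = "light-model"
REASONING_MODEL = "reasoning-model"

# Hypothetical substrings that hint a request needs multi-step reasoning.
REASONING_HINTS = ("analyze", "compare", "plan", "why", "step by step")


@dataclass
class Route:
    model: str
    reason: str


def route(prompt: str, max_light_words: int = 40) -> Route:
    """Pick a model using simple heuristics: keywords first, then request length."""
    text = prompt.lower()
    if any(hint in text for hint in REASONING_HINTS):
        return Route(REASONING_MODEL, "reasoning keyword")
    if len(text.split()) > max_light_words:
        return Route(REASONING_MODEL, "long request")
    return Route(LIGHT_MODEL, "simple request")


print(route("What time zone is Nairobi in?").model)
print(route("Compare these three vendor proposals and plan a rollout").model)
```

The design choice is to default to the cheap path and escalate only on a signal. That approach keeps the expensive reasoning model's cost tied to the requests that plausibly need it.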

The companies that figure out how to deliver reliable, cost-effective reasoning at scale will dominate the next phase of AI deployment. Google is making its bet with Gemini 3.1 Pro.

If you're building AI applications that require more than surface-level responses, pay attention.

Build AI That Works For Your Business

At AI Agents Plus, we help companies move from AI experiments to production systems that deliver real ROI. Whether you need:

- Custom AI Agents — Autonomous systems that handle complex workflows, from customer service to operations

- Rapid AI Prototyping — Go from idea to working demo in days using vibe coding and modern AI frameworks

- Voice AI Solutions — Natural conversational interfaces for your products and services

We've built AI systems for startups and enterprises across Africa and beyond.

Ready to explore what AI can do for your business? Let's talk →

About AI Agents Plus Editorial

AI automation expert and thought leader in business transformation through artificial intelligence.