Meta Llama 4 Scout: The First Enterprise-Ready Open Source LLM

Meta just dropped Llama 4 Scout, and it's not another incremental upgrade. This is the first open source model built from the ground up for enterprise use, with RAG, tool use, and compliance features that actually work in production environments.

For the last three years, businesses have faced a brutal choice: use closed models like GPT-4 or Claude with vendor lock-in and per-token pricing, or wrestle with open source models that need months of engineering to make production-ready. Llama 4 Scout changes that equation.

What Makes Llama 4 Scout Different

Meta built this model specifically for enterprise deployment. Here's what sets it apart:

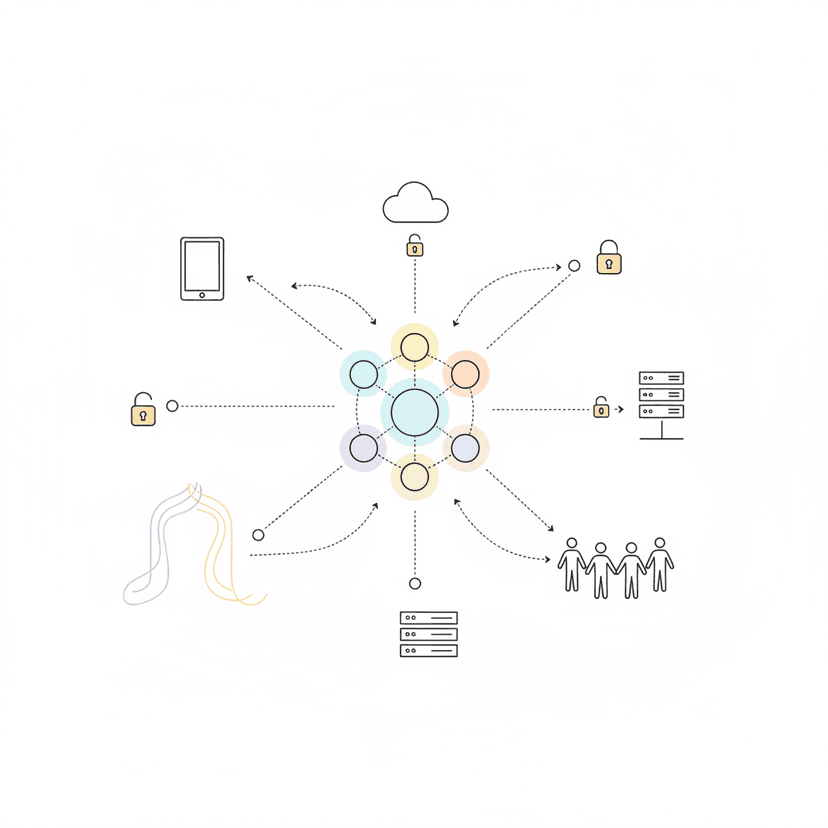

Native RAG Integration: Most open models bolt on retrieval-augmented generation as an afterthought. Scout has RAG architecture baked into the training process, with optimized context injection and citation tracking out of the box.
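To make "context injection and citation tracking" concrete, here is a minimal Python sketch of how that pattern usually looks at the application layer. It is not Scout's internal mechanism or an official Meta API: the `Passage` type, the `[n]` citation convention, and both helper names are assumptions made for illustration, and the model's reply is a hard-coded stand-in.

```python
import re
from dataclasses import dataclass


@dataclass
class Passage:
    """A retrieved chunk of text plus where it came from (hypothetical type)."""
    source: str
    text: str


def build_prompt(question: str, passages: list[Passage]) -> str:
    """Inject retrieved passages into the prompt as numbered sources."""
    numbered = "\n".join(
        f"[{i}] ({p.source}) {p.text}" for i, p in enumerate(passages, start=1)
    )
    return (
        "Answer using only the sources below and cite them as [n].\n\n"
        f"Sources:\n{numbered}\n\n"
        f"Question: {question}\nAnswer:"
    )


def cited_sources(answer: str, passages: list[Passage]) -> list[str]:
    """Map [n] markers in an answer back to source names, in first-use order."""
    seen: list[str] = []
    for match in re.finditer(r"\[(\d+)\]", answer):
        index = int(match.group(1))
        # Ignore markers that do not correspond to an injected passage.
        if 1 <= index <= len(passages):
            source = passages[index - 1].source
            if source not in seen:
                seen.append(source)
    return seen


passages = [
    Passage("refund-policy.md", "Refunds are issued within 14 days of purchase."),
    Passage("shipping-faq.md", "Standard shipping takes 3 to 5 business days."),
]
prompt = build_prompt("How long do refunds take?", passages)

# Stand-in for the model's reply; no model is called in this sketch.
answer = "Refunds are issued within 14 days [1]."
print(cited_sources(answer, passages))  # ['refund-policy.md']
```

Numbering the sources in the prompt is what makes the citations checkable afterwards: any `[n]` that does not map to an injected passage can be flagged instead of trusted.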

Enterprise Tool Use: Unlike earlier Llama versions that struggled with function calling, Scout includes a trained tool orchestration layer. It can reliably call APIs, query databases, and coordinate multi-step workflows without the hallucination problems that plague retrofitted open models.
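Whatever the model does internally, the calling application still has to validate and execute each tool call. The sketch below shows one common way to do that in Python. The JSON shape (`{"tool": ..., "arguments": {...}}`), the `get_order_status` function, and the registry are all hypothetical; Scout's actual tool-call format may differ.

```python
import json
from typing import Any, Callable

# Registry of tools the application is willing to expose (names are hypothetical).
TOOLS: dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so the dispatcher can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_order_status(order_id: str) -> dict:
    # Stand-in for a real database or API lookup.
    return {"order_id": order_id, "status": "shipped"}


def dispatch(model_output: str) -> dict:
    """Validate a JSON tool call emitted by the model and execute it."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError:
        return {"error": "model output was not valid JSON"}
    if not isinstance(call, dict):
        return {"error": "tool call must be a JSON object"}
    name = call.get("tool")
    if name not in TOOLS:
        # Reject hallucinated tool names instead of guessing.
        return {"error": f"unknown tool: {name}"}
    try:
        return {"result": TOOLS[name](**call.get("arguments", {}))}
    except TypeError as exc:
        # Typically raised when arguments are missing, extra, or not a mapping.
        return {"error": f"bad arguments for {name}: {exc}"}


print(dispatch('{"tool": "get_order_status", "arguments": {"order_id": "A17"}}'))
print(dispatch('{"tool": "delete_everything", "arguments": {}}'))
```

Returning structured errors rather than raising lets the application feed the failure back to the model for a retry, which is where tool-selection reliability shows up in practice.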

Compliance-First Design: Scout includes audit trails, content filtering, and data lineage tracking as core features. Every generation includes metadata about what training data influenced it — critical for regulated industries like finance and healthcare.

Permissive Commercial License: Unlike restrictive "research-only" licenses, Llama 4 Scout ships under the Llama 4 Community License, which allows commercial use. You can deploy it, modify it, and build products on it, subject to the license's acceptable use policy and its separate terms for very large platforms.

The Numbers That Matter

Meta released benchmarks comparing Scout to GPT-4o and Claude 3.5 Sonnet on enterprise-specific tasks:

- Structured data extraction: 94% accuracy (vs. 91% GPT-4o, 93% Claude)

- Multi-turn task completion: 89% success rate (vs. 92% GPT-4o, 91% Claude)

- Function calling reliability: 96% correct tool selection (vs. 88% GPT-4o, 94% Claude)

- Latency: 340ms average (vs. 580ms GPT-4o, 420ms Claude)

The gap has closed. On these numbers, Scout leads on structured data extraction, tool selection, and latency, and trails the closed models by 2-3 points only on multi-turn task completion, while giving you full control over deployment, data, and costs.
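The margins can be read straight off the figures quoted above. This short Python sketch compares Scout against the better of the two closed models on each metric; the function name and the sign convention (positive means Scout is ahead) are choices made here for illustration.

```python
# Figures as quoted in the list above: (Scout, GPT-4o, Claude 3.5 Sonnet).
ACCURACY = {
    "Structured data extraction": (94, 91, 93),
    "Multi-turn task completion": (89, 92, 91),
    "Function calling reliability": (96, 88, 94),
}
LATENCY_MS = (340, 580, 420)  # lower is better


def margin_vs_best_closed(scout, gpt4o, claude, higher_is_better=True):
    """Scout's lead over the stronger closed model; negative means it trails."""
    if higher_is_better:
        return scout - max(gpt4o, claude)
    return min(gpt4o, claude) - scout


for task, scores in ACCURACY.items():
    print(f"{task}: {margin_vs_best_closed(*scores):+d} points")
print(f"Latency: {margin_vs_best_closed(*LATENCY_MS, higher_is_better=False):+d} ms")
```

This prints +1, -3, and +2 points for the three accuracy metrics and +80 ms for latency.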

Why This Matters Right Now

The AI market is hitting an inflection point. Companies that went all-in on ChatGPT or Claude Enterprise are seeing bills spike as usage scales. A mid-sized SaaS company running AI customer support can burn $50,000/month on API calls alone.

Llama 4 Scout lets you escape that trap:

- Deploy on your infrastructure: Run it on AWS, GCP, Azure, or on-prem. Your data never leaves your control.

- Zero per-token costs: You pay for compute, not tokens. Within the capacity of the hardware you provision, scaling from 1,000 to 1 million requests adds no per-request charge.

- Customize without limits: Fine-tune on your data, modify the architecture, integrate deeply with your systems — no permission needed.

The Enterprise Features No One Talks About

Beyond the benchmark numbers, Scout includes capabilities that matter more in real deployments:

Deterministic Output Mode: For compliance and testing, Scout can generate bit-identical outputs for identical inputs — something closed APIs can't guarantee.
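The idea behind deterministic output can be shown with a toy example. The Python sketch below uses a made-up next-token distribution, not a real model: greedy decoding always picks the most probable token, while sampling is repeatable only when the random seed is pinned. In a real deployment, bit-identical output generally also depends on pinning the weights, software versions, and hardware configuration, which this sketch does not model.

```python
import random

# Toy next-token probabilities; a real model would produce these at each step.
NEXT_TOKEN_PROBS = {"approved": 0.55, "denied": 0.30, "pending": 0.15}


def greedy_pick(probs: dict[str, float]) -> str:
    """Always return the most probable token: no randomness involved."""
    return max(probs, key=probs.get)


def sampled_pick(probs: dict[str, float], rng: random.Random) -> str:
    """Draw a token in proportion to its probability using the given RNG."""
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]


def sample_sequence(seed: int, n: int = 10) -> list[str]:
    """Sample n tokens from a generator seeded with a fixed value."""
    rng = random.Random(seed)
    return [sampled_pick(NEXT_TOKEN_PROBS, rng) for _ in range(n)]


# Greedy decoding gives the same answer on every run.
greedy_runs = {greedy_pick(NEXT_TOKEN_PROBS) for _ in range(100)}
print(greedy_runs)  # {'approved'}

# Sampling is reproducible only because both runs use the same seed.
print(sample_sequence(7) == sample_sequence(7))  # True
```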

Offline Operation: Once deployed, Scout runs without internet connectivity. Critical for secure environments, manufacturing floors, and edge deployments.

Custom Safety Layers: You control content filtering. No opaque moderation policies that block legitimate business queries. Define your own rules.
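"Define your own rules" can be as simple as a filter you run on prompts and outputs. Here is a minimal Python sketch of such a layer. The `Rule` type, the two example rules, and the "Project Falcon" codename are invented for illustration; this is not a Scout feature or API, just one way a self-hosted deployment could own its filtering policy.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """One filtering rule (hypothetical structure)."""
    name: str
    pattern: re.Pattern
    action: str  # "block" or "redact"


RULES = [
    # 16-digit card numbers written in groups of four, with optional separators.
    Rule("card_number", re.compile(r"\b\d{4}(?:[ -]?\d{4}){3}\b"), "redact"),
    # An example internal codename that must never be discussed.
    Rule("internal_codename", re.compile(r"\bproject\s+falcon\b", re.IGNORECASE), "block"),
]


def apply_rules(text: str, rules: list[Rule] = RULES) -> tuple[str, list[str]]:
    """Return the filtered text and the names of the rules that fired."""
    fired: list[str] = []
    for rule in rules:
        if not rule.pattern.search(text):
            continue
        fired.append(rule.name)
        if rule.action == "block":
            # A blocking rule replaces the whole text and stops processing.
            return "[blocked by policy]", fired
        text = rule.pattern.sub("[redacted]", text)
    return text, fired


print(apply_rules("Card 4111 1111 1111 1111 on file"))
print(apply_rules("What are your opening hours?"))
```

Returning the list of fired rules alongside the text gives you a record of why something was filtered, which is useful for the audit requirements mentioned earlier.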

Multi-Language Depth: Native support for 95 languages, with strong performance in business-critical languages like German, Japanese, Korean, and Mandarin.

What This Means For Your Business

If you're currently paying for GPT or Claude at scale, run the math:

Scenario: 10M tokens/day (moderate enterprise usage)

- GPT-4o cost: ~$600/day = $18,000/month

- Claude 3.5 Sonnet cost: ~$450/day = $13,500/month

- Llama 4 Scout cost: ~$2,000/month in compute (AWS g5.2xlarge instances)

ROI timeline: 1-2 months to break even, then 85-90% cost reduction ongoing.
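To check the math yourself, here is a small Python sketch that reproduces the scenario. The dollar figures are the ones quoted above; the 30-day month and the function names are assumptions made here.

```python
DAYS_PER_MONTH = 30
TOKENS_PER_DAY = 10_000_000

# Daily API spend and monthly compute as quoted in the scenario above (USD).
API_COST_PER_DAY = {"GPT-4o": 600, "Claude 3.5 Sonnet": 450}
SCOUT_COMPUTE_PER_MONTH = 2_000


def monthly_api_cost(cost_per_day: float) -> float:
    """Monthly API bill for a given daily spend."""
    return cost_per_day * DAYS_PER_MONTH


def cost_reduction(api_monthly: float, self_hosted_monthly: float) -> float:
    """Fraction of the API bill saved by self-hosting."""
    return 1 - self_hosted_monthly / api_monthly


for provider, per_day in API_COST_PER_DAY.items():
    monthly = monthly_api_cost(per_day)
    per_million = per_day / (TOKENS_PER_DAY / 1_000_000)
    saved = cost_reduction(monthly, SCOUT_COMPUTE_PER_MONTH)
    print(f"{provider}: ${monthly:,.0f}/month, "
          f"${per_million:.0f} per 1M tokens, {saved:.0%} saved")
```

This gives 89% saved against GPT-4o and 85% against Claude 3.5 Sonnet, matching the 85-90% range. The break-even period is not computed here because it depends on one-time setup and engineering costs, which the scenario does not specify.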

When Llama 4 Scout Makes Sense

- You process >5M tokens/day: At the rates in the scenario above, that is roughly $6,750-$9,000/month in API fees against ~$2,000 in compute, so cost savings justify infrastructure investment

- Data sovereignty matters: Finance, healthcare, government, EU operations

- You need customization: Specific fine-tuning, domain adaptation, unique workflows

- Predictable costs required: Fixed compute vs. variable API costs

When To Stick With Closed Models

- Low volume usage: <1M tokens/day is probably cheaper with APIs, since the bill stays under the ~$2,000/month compute cost

- Cutting-edge reasoning: GPT-4o and Claude still lead on complex multi-step reasoning

- No ML ops team: Managing inference infrastructure requires real engineering resources
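Where the line falls between the two lists depends on your own numbers. As a rough guide, this Python sketch computes the daily token volume at which API spend equals a fixed compute bill. The per-token rates are the ones implied by the 10M tokens/day scenario earlier ($600 and $450 per day), the compute figure is the ~$2,000/month quoted there, and engineering and ML ops staffing costs are deliberately left out.

```python
DAYS_PER_MONTH = 30


def break_even_tokens_per_day(api_price_per_million: float,
                              compute_per_month: float) -> float:
    """Daily token volume at which API spend equals fixed monthly compute."""
    monthly_tokens_millions = compute_per_month / api_price_per_million
    return monthly_tokens_millions / DAYS_PER_MONTH * 1_000_000


# USD per 1M tokens, implied by $600/day and $450/day at 10M tokens/day.
IMPLIED_RATES = {"GPT-4o": 60, "Claude 3.5 Sonnet": 45}

for provider, rate in IMPLIED_RATES.items():
    tokens = break_even_tokens_per_day(rate, compute_per_month=2_000)
    print(f"{provider}: break-even at about {tokens / 1e6:.2f}M tokens/day")
```

Under those assumptions the break-even lands at about 1.11M tokens/day against GPT-4o and 1.48M against Claude 3.5 Sonnet. Adding staffing costs pushes both figures higher.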

The Competitive Response

Expect fast reactions:

- Anthropic will likely emphasize Claude's reasoning advantage and expand enterprise features

- OpenAI may accelerate the GPT-4.5 release timeline

- Google could make Gemini deployment easier to compete on the infrastructure flexibility angle

But Meta's made the first real move in making open source enterprise-viable. The pressure's on.

What To Watch Next

Meta's already hinting at what's coming:

- Llama 4 Vision (Q2 2026): Adding native vision capabilities to the Scout architecture

- Scout Agents Framework: An official agentic workflow system optimized for Scout

- Enterprise Support Tier: Paid support and managed deployment options for risk-averse enterprises

The model is available now under the Llama 4 Community License. Documentation, deployment guides, and example integrations are live on the Meta AI GitHub.

Build AI Systems That You Actually Own

At AI Agents Plus, we help companies deploy open source AI in production — from Llama and Mixtral to custom models fine-tuned on your data.

Our services include:

- Custom AI Agent Development — Build autonomous systems that handle complex business workflows

- Rapid AI Prototyping — Go from idea to working demo using vibe coding and modern frameworks

- Open Source AI Deployment — Expert guidance on running models like Llama 4 Scout at scale

Based in Nairobi, serving clients globally.

Ready to own your AI infrastructure instead of renting it? Let's talk →

About AI Agents Plus Editorial

AI automation expert and thought leader in business transformation through artificial intelligence.