Dell Brings Autonomous AI Agents to the Desktop: Enterprise Hardware Finally Catches Up

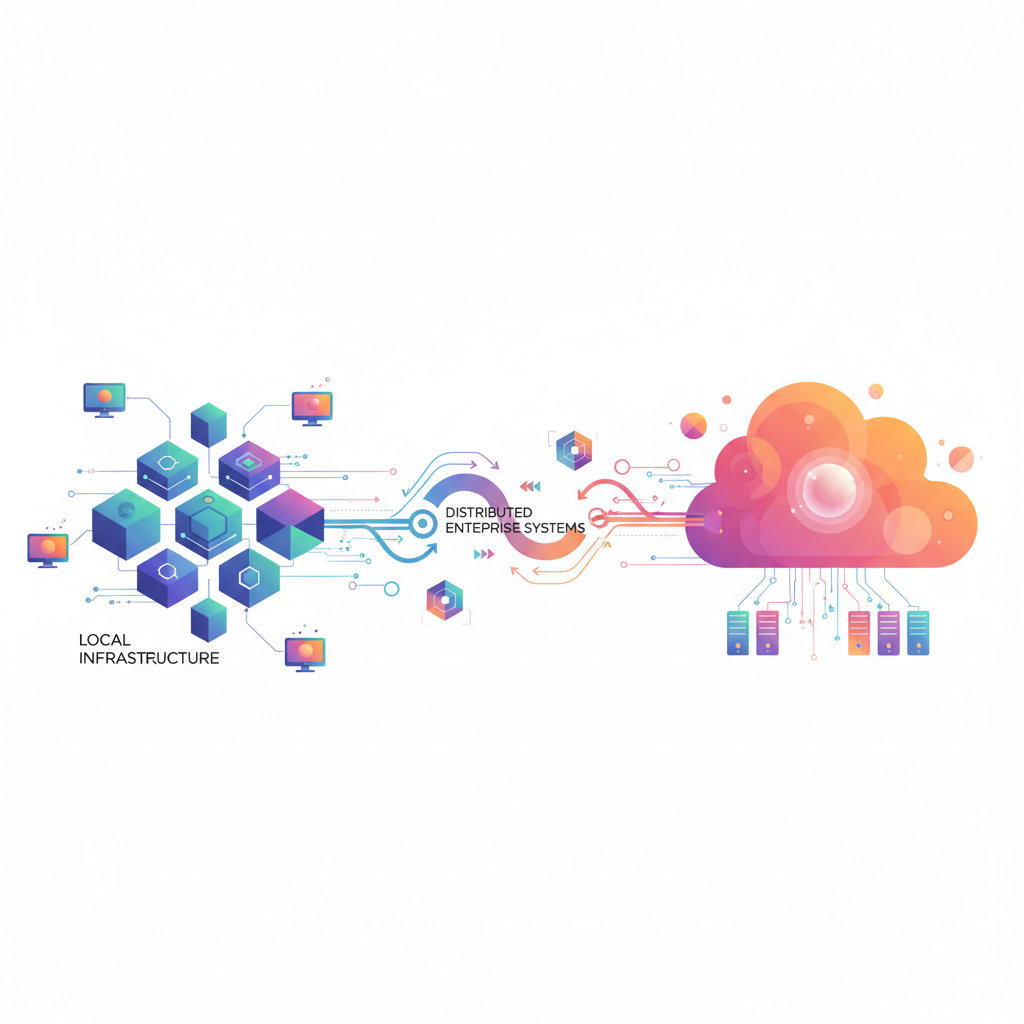

Dell's new desktop supercomputers powered by NVIDIA Grace Blackwell mark a watershed moment: autonomous AI agents are moving from the cloud to local enterprise infrastructure. Here's why that matters for your business.

Dell just announced something the AI agent industry has been waiting for: desktop hardware powerful enough to run autonomous, self-evolving AI agents locally. The Dell Pro Max with GB10 and the Dell Pro Max with GB300, both built on NVIDIA's Grace Blackwell architecture, aren't just faster workstations; they're purpose-built infrastructure for the next wave of enterprise AI.

This matters because until now, running sophisticated AI agents meant trading off cloud costs, latency, and data sovereignty against one another. That tradeoff is about to change.

What Dell Actually Built

These Pro Max models feature NVIDIA's Grace Blackwell superchips, which pair Arm-based Grace CPUs with Blackwell GPUs designed specifically for AI workloads. These aren't gaming rigs with AI marketing slapped on. Dell and NVIDIA architected these systems to handle the memory bandwidth, parallel processing, and thermal management that autonomous AI agents demand.

Key specs that matter:

- Grace Blackwell superchip: Arm CPU cores and a Blackwell GPU in one package, linked by NVIDIA's NVLink-C2C interconnect (reduces the CPU-GPU transfer bottleneck)

- Unified memory architecture: Agents can access both CPU and GPU memory pools without copying data

- Local model inference: Run 7B-70B parameter models without cloud round trips, with quantization needed toward the top of that range on smaller configurations

- NVIDIA Agent Toolkit integration: Native support for NemoClaw and OpenShell agent frameworks

This is the first time enterprise-grade local AI agent infrastructure ships as a standard desktop configuration. Not a server rack. Not a cloud instance. A machine that sits under your desk.

Why Enterprises Need Local AI Agent Hardware

The cloud-first AI strategy made sense when we were running single inference calls. But autonomous agents are different—they make dozens or hundreds of LLM calls per task, maintain state across conversations, and need sub-100ms response times for voice and real-time applications.

Running that workload in the cloud gets expensive fast. More importantly, it creates four problems Dell's local approach solves:

1. Data Never Leaves Your Network

For financial services, healthcare, and legal firms, sending customer data to external APIs is a compliance nightmare. Local agents process everything on-premises. Your trade secrets, client communications, and proprietary data stay on hardware you control.

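2. Network Overhead Drops to Single-Digit Milliseconds

Cloud inference adds 50-200ms of network and queuing overhead to every call. For voice AI customer service systems or real-time decision agents, that's the difference between natural conversation and awkward pauses. Running the model locally removes the round trip and cuts that overhead to under 10ms; the model's own generation time still applies either way.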
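The latency gap matters more for agents than for single calls because an agent chains its calls one after another. A rough sketch, assuming a hypothetical task of 50 sequential LLM calls and using the per-call overhead figures above, shows how milliseconds turn into seconds. Model generation time is deliberately left out, since it applies to both cloud and local inference.

```python
# Rough estimate of network/queuing overhead accumulated by a sequential agent.
# Per-call overhead figures come from the article's ranges; the call count is a
# hypothetical example, and model generation time is excluded on purpose.


def total_overhead_ms(calls: int, overhead_per_call_ms: float) -> float:
    """Overhead added when `calls` LLM requests run one after another."""
    return calls * overhead_per_call_ms


if __name__ == "__main__":
    calls_per_task = 50  # hypothetical agent task
    scenarios = {
        "cloud (low)": 50.0,
        "cloud (high)": 200.0,
        "local (upper bound)": 10.0,
    }
    for label, per_call in scenarios.items():
        seconds = total_overhead_ms(calls_per_task, per_call) / 1000
        print(f"{label:>20}: {seconds:.1f} s of overhead per task")
```

Under these assumptions the cloud path adds 2.5 to 10 seconds of pure waiting per task, versus about half a second locally. Agents that run calls in parallel would see less, so treat this as an upper-bound illustration for strictly sequential workflows.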

3. Cost Structure Shifts from Variable to Fixed

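Cloud AI bills scale with usage: more agent calls mean higher costs. With local hardware, you pay once for the machine and can run as many inferences as its throughput allows, with power and maintenance as the main ongoing costs. For high-volume applications, a Dell Pro Max can pay for itself within months compared with per-token cloud pricing; at low volumes, the payback period stretches out considerably.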
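Whether the hardware pays for itself "in months" depends almost entirely on volume. The sketch below computes a break-even point from a one-time purchase price, a monthly power cost, and a per-token cloud rate. Every number in the example is a hypothetical placeholder, not a Dell or cloud-provider price, and the model ignores staffing and MLOps costs.

```python
# Break-even sketch: months until a one-time hardware purchase beats per-token
# cloud billing. All example figures are hypothetical placeholders.
import math


def breakeven_months(
    hardware_cost: float,
    monthly_power_cost: float,
    tokens_per_month: float,
    cloud_price_per_million_tokens: float,
) -> float:
    """Return months to break even, or math.inf if local never pays off."""
    cloud_monthly = tokens_per_month / 1_000_000 * cloud_price_per_million_tokens
    monthly_savings = cloud_monthly - monthly_power_cost
    if monthly_savings <= 0:
        return math.inf  # cloud is cheaper at this volume
    return hardware_cost / monthly_savings


if __name__ == "__main__":
    months = breakeven_months(
        hardware_cost=5_000,                 # hypothetical purchase price
        monthly_power_cost=50,               # hypothetical electricity cost
        tokens_per_month=500_000_000,        # hypothetical agent volume
        cloud_price_per_million_tokens=2.0,  # hypothetical blended rate
    )
    print(f"Break-even after about {months:.1f} months")
```

With these placeholders, a $1,000 monthly cloud bill breaks even in about 5.3 months. Cut the volume to 50 million tokens per month ($100 of cloud spend) and the same machine takes 100 months, which is why the fixed-cost argument only holds for high-volume workloads.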

4. No Internet Dependency

Local agents work during internet outages, in air-gapped environments, and in edge locations with poor connectivity. Manufacturing floors, hospitals, field service—anywhere uptime matters more than cloud convenience.

The NVIDIA Agent Ecosystem Play

This hardware launch coincides with NVIDIA's push into autonomous agent platforms. The Agent Toolkit includes:

- NemoClaw: NVIDIA's agent orchestration framework for coordinating multiple AI agents across tasks

- OpenShell: Secure execution environment for agents that need system-level access

- Pre-trained agent models: Fine-tuned versions of Llama, Mistral, and Phi optimized for agent workflows

What makes this ecosystem sticky is that NVIDIA is giving enterprises what they actually want: pre-built components that work together. You're not stitching together LangChain, Semantic Kernel, and custom glue code. You're using tested primitives that NVIDIA supports.

For context, this is similar to what we cover in our guide to AI agent development frameworks—but NVIDIA is bundling the entire stack with hardware certification.

What This Means For Your Business

If you're evaluating AI agent deployments, Dell's announcement changes the decision tree:

You should consider local agent hardware if:

- You handle regulated data (HIPAA, GDPR, financial services)

- Your agents make >10,000 LLM calls per day (per-call cloud costs add up quickly at that volume)

- You need <50ms latency for voice or real-time applications

- You operate in environments with unreliable internet connectivity

- You're building proprietary agent systems with sensitive business logic

Cloud-based agents still make sense if:

- You're prototyping and need flexibility to switch models quickly

- Your agent workload is unpredictable (spiky traffic)

- You want zero infrastructure management overhead

- You're using frontier models (GPT-4, Claude Opus) not available for local deployment

Hybrid is the real answer for most enterprises: Cloud for experimentation and frontier models, local for production workloads with known usage patterns and data sensitivity requirements.
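The checklist can be sketched as a small function. This is a minimal illustration assuming a hypothetical `Workload` record; the two thresholds (10,000 calls per day and 50ms) come from the lists above, while the rule that reasons on both sides mean "hybrid" is an assumption that mirrors the paragraph above, not a formal sizing method.

```python
# Sketch of the local-vs-cloud checklist. Field names and the "both sides
# means hybrid" rule are illustrative assumptions; the thresholds come from
# the article's lists.
from dataclasses import dataclass


@dataclass
class Workload:
    regulated_data: bool = False
    llm_calls_per_day: int = 0
    latency_budget_ms: float = 1000.0
    unreliable_connectivity: bool = False
    sensitive_business_logic: bool = False
    prototyping: bool = False
    spiky_traffic: bool = False
    wants_zero_infra_management: bool = False
    needs_frontier_model: bool = False


def recommend(w: Workload) -> str:
    """Return "local", "cloud", or "hybrid" for a workload."""
    local_reasons = [
        w.regulated_data,
        w.llm_calls_per_day > 10_000,
        w.latency_budget_ms < 50,
        w.unreliable_connectivity,
        w.sensitive_business_logic,
    ]
    cloud_reasons = [
        w.prototyping,
        w.spiky_traffic,
        w.wants_zero_infra_management,
        w.needs_frontier_model,
    ]
    if any(local_reasons) and any(cloud_reasons):
        return "hybrid"  # split workloads across both
    if any(local_reasons):
        return "local"
    return "cloud"  # nothing pushes toward local hardware


if __name__ == "__main__":
    clinic_voice_agent = Workload(
        regulated_data=True,
        latency_budget_ms=40,
        needs_frontier_model=True,  # e.g. drafting with a frontier model
    )
    print(recommend(clinic_voice_agent))
```

A regulated clinic running a low-latency voice agent that also drafts documents with a frontier model comes out as "hybrid", matching the conclusion above. A real evaluation would weigh these factors rather than treat them as equal booleans.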

The Bigger Shift: Agents as Infrastructure

Dell partnering with NVIDIA to ship agent-optimized desktops signals that AI agents are moving from experimental to infrastructure. This is the same pattern we saw with GPU computing—first it was niche, then NVIDIA built the ecosystem, then Dell/HP/Lenovo shipped it as standard hardware.

The companies getting ahead are the ones treating AI agent development as a core competency, not an IT experiment. Local hardware enables production deployment at scale, but you still need the expertise to build agents that actually work.

Technical Considerations Before You Buy

If you're considering the Dell Pro Max for agent workloads, here's what to validate:

- Model size compatibility: A 70B parameter model fits on the smaller GB10 system only when quantized, since its weights alone need roughly 140GB at 16-bit precision against 128GB of unified memory, and even then throughput is modest. For production, stick to 7B-34B models unless you're doing batch processing.

- Agent framework lock-in: NVIDIA's toolkit works best with NVIDIA hardware. If you've built agents on AWS Bedrock or Azure AI, you'll need to refactor.

- Cooling and power: Power draw differs widely between the compact GB10 system and the much larger GB300 workstation. Check the rated power of the configuration you order and make sure your office circuits and HVAC can handle sustained load.

- Skills gap: Running local AI infrastructure means your team needs MLOps capabilities: model deployment, monitoring, version management. Cloud platforms handle this for you.

- Update cadence: Agent frameworks are evolving fast. Local hardware means you're responsible for keeping software dependencies current.
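The model-size caveat comes down to arithmetic: weight memory is roughly parameter count times bytes per parameter. The sketch below applies that rule of thumb with an assumed 20% headroom for the KV cache and runtime buffers (a placeholder; real overhead depends on context length and batch size) and compares the result with the 128GB of unified memory on GB10-class systems.

```python
# Rule-of-thumb memory estimate for serving an LLM locally.
# weights ≈ parameters × bytes per parameter; the 1.2 overhead factor for the
# KV cache and runtime buffers is an assumption, not a measured value.
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "int4": 0.5}


def estimated_memory_gb(params_billion: float, precision: str, overhead: float = 1.2) -> float:
    """Approximate memory (GB) needed to hold and run the model."""
    weights_gb = params_billion * BYTES_PER_PARAM[precision]  # 1e9 params x bytes = GB
    return weights_gb * overhead


def fits(params_billion: float, precision: str, memory_gb: float) -> bool:
    """True if the estimate fits within the available memory."""
    return estimated_memory_gb(params_billion, precision) <= memory_gb


if __name__ == "__main__":
    GB10_MEMORY_GB = 128  # unified memory on GB10-class systems
    for size in (7, 34, 70):
        for precision in ("fp16", "fp8", "int4"):
            need = estimated_memory_gb(size, precision)
            verdict = "fits" if fits(size, precision, GB10_MEMORY_GB) else "does not fit"
            print(f"{size}B @ {precision}: ~{need:.0f} GB, {verdict} in {GB10_MEMORY_GB} GB")
```

Under these assumptions a 16-bit 70B model needs about 168GB and does not fit, while 8-bit (about 84GB) and 4-bit (about 42GB) versions do. Fitting in memory says nothing about tokens per second, which is the other half of the caveat.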

What Happens Next

Dell's launch is the first domino. Expect:

- Lenovo and HP equivalents within 6 months (they won't cede the enterprise market to Dell)

- Apple Silicon agent optimizations as Apple pushes its Neural Engine for on-device AI

- Agent-specific benchmarks replacing generic GPU benchmarks ("agents per second" will become a metric)

- Hybrid deployment tools that split workloads between local and cloud based on data sensitivity
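A hybrid tool of the kind predicted above could start as a simple per-request router. The sketch below is hypothetical throughout: the endpoint URLs, the `Request` type, and the regex checks standing in for real data classification are all placeholders, not part of any NVIDIA or Dell product.

```python
# Sketch of a hybrid router: keep sensitive requests on local hardware and send
# the rest to a cloud model only when a frontier model is required. Endpoints,
# the Request type, and the sensitivity patterns are hypothetical placeholders;
# a real deployment would use a proper data-classification step.
import re
from dataclasses import dataclass

LOCAL_ENDPOINT = "http://localhost:8000/v1"      # hypothetical local server
CLOUD_ENDPOINT = "https://cloud.example.com/v1"  # hypothetical cloud API

# Crude patterns for illustration only.
SENSITIVE_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # US SSN-like numbers
    re.compile(r"\b(diagnosis|patient|account number)\b", re.IGNORECASE),
]


@dataclass
class Request:
    prompt: str
    needs_frontier_model: bool = False


def is_sensitive(text: str) -> bool:
    """True if any sensitivity pattern matches the text."""
    return any(p.search(text) for p in SENSITIVE_PATTERNS)


def route(req: Request) -> str:
    """Return the endpoint a request should go to."""
    if is_sensitive(req.prompt):
        return LOCAL_ENDPOINT  # sensitive data never leaves the network
    if req.needs_frontier_model:
        return CLOUD_ENDPOINT
    return LOCAL_ENDPOINT  # default to the fixed-cost local hardware
```

Checking sensitivity first means a request that needs a frontier model but contains patient data still stays local; defaulting everything else to local keeps the fixed-cost hardware busy, while a team prioritizing quality might default to the cloud instead.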

The enterprises that win over the next two years will be the ones who figure out their agent infrastructure strategy now—before it becomes a competitive requirement.

For many regulated industries, local agent hardware is becoming the most direct way to deploy autonomous AI at scale while keeping data inside compliance boundaries. Dell just made that option real.

Build AI Agents That Work On Any Infrastructure

At AI Agents Plus, we help businesses deploy AI agents on whatever infrastructure makes sense for their use case—cloud, local, or hybrid. Our expertise includes:

- Custom AI Agent Development — We build autonomous agents for customer service, operations, and knowledge work

- Infrastructure Planning — Cloud vs local vs hybrid? We help you choose based on your data, latency, and cost requirements

- Voice AI Systems — Natural conversation interfaces optimized for low-latency local deployment

We've built AI agent systems for companies across Africa and globally, from startups to enterprises.

Ready to deploy AI agents that deliver ROI? Let's talk about your use case →

About AI Agents Plus Editorial

AI automation expert and thought leader in business transformation through artificial intelligence.